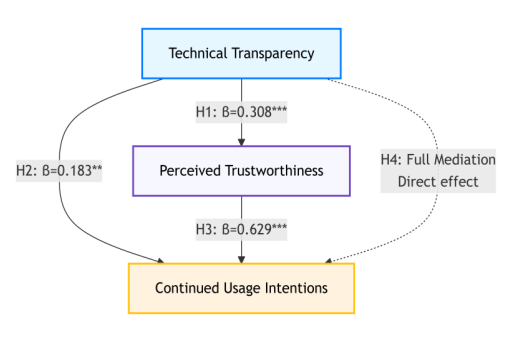

As intelligent tutoring systems (ITS) become increasingly embedded in higher education, understanding the psychological mechanisms driving learners’ continued engagement is essential. Drawing on transparency research, trust theory, and innovation literacy frameworks, this study develops and validates a mediated model linking technical transparency, perceived trustworthiness, and continued usage intentions. A total of 303 valid responses were collected from university students, and hypotheses were tested using correlation analysis, hierarchical multiple regression, and Hayes’ PROCESS macro (Model 4) in SPSS with 5,000 bootstrap resamples, a robust approach for testing mediation effects. Results show that technical transparency significantly enhances students’ perceived trustworthiness of ITS (β = 0.308, p < 0.001) and, before the mediator is entered, has a positive effect on continued usage intentions (β = 0.183, p < 0.01). Perceived trustworthiness, in turn, exerts a strong positive influence on continued usage intentions (β = 0.629, p < 0.001). When perceived trustworthiness is included as a mediator, the direct effect of technical transparency on continued usage intentions becomes non-significant. PROCESS results confirm full mediation: the indirect effect (technical transparency → perceived trustworthiness → continued usage intentions) is significant (95% CI [0.138, 0.305], excluding 0), indicating that transparency fosters sustained engagement primarily by enhancing students’ confidence in the system’s reliability, fairness, and pedagogical integrity. The study contributes to intelligent learning research by (1) empirically establishing transparency as a key antecedent of trust in AI-based learning environments; (2) validating trust as the core psychological mechanism sustaining long-term ITS use through PROCESS-based mediation testing; and (3) linking trustworthy ITS design to innovation literacy cultivation. Practical implications and future directions are discussed to support transparent, trustworthy, and learner-centered ITS development.

1.1. Research Background

The integration of artificial intelligence (AI) technologies into higher education has fundamentally reshaped teaching and learning paradigms over the past decade. Digital transformation has moved beyond simple content delivery toward adaptive, personalized, and data-driven instruction that allows learners to receive tailored feedback and flexible learning pathways. Among various AI-enabled innovations, intelligent tutoring systems (ITS) have become a focal point of this transformation because they simulate the adaptive guidance traditionally provided by human tutors while maintaining scalability across diverse learning environments. ITS are now deployed in disciplines ranging from mathematics and programming to language learning, where they provide individualized assessment, real-time diagnostic feedback, and continuous instructional support [1,2].

Despite these technological advancements, consistent and long-term adoption by students remains elusive. In practice, many learners discontinue or only sporadically engage with ITS after initial exposure, suggesting that technological sophistication alone cannot guarantee sustained educational impact. Scholars increasingly attribute this discontinuity to a trust gap—the disparity between users’ expected and actual levels of trust in intelligent educational technologies. When students perceive system recommendations as opaque, unpredictable, or biased, their confidence in both the system and their own learning outcomes diminishes, leading to reduced motivation and engagement [3].

Empirical studies have identified several antecedents of this trust gap. Jiang, Li, and Tang (2024) observed that insufficient algorithmic transparency, ambiguous feedback mechanisms, and unresolved concerns regarding data privacy are principal factors reducing learners’ trust [4]. A 2024 survey by the China Institute of Intelligent Education revealed that more than sixty percent of ITS users expressed apprehension about personal-data usage and ethical accountability, directly influencing their willingness to continue using the systems. Similar findings in global contexts demonstrate that users’ trust is a decisive psychological mechanism shaping adoption and continued utilization of educational technologies [5]. Thus, while AI-driven systems promise unprecedented personalization and efficiency, their pedagogical potential depends on users’ sustained trust in both technological integrity and educational fairness.

From an institutional perspective, this issue also intersects with broader reform agendas such as innovation-driven education and high-quality talent cultivation. As Chinese universities advance toward Education 5.0 and emphasize digital ethics and innovation competence [6], the reliability and transparency of intelligent learning environments become essential conditions for nurturing reflective and creative learners. In this context, bridging the trust gap is not solely a matter of improving user retention; it represents a pathway to enabling innovation literacy through trustworthy human–AI collaboration.

1.2. Research Problem

Although the functional performance of ITS determines their technical feasibility, user trust ultimately defines their educational efficacy. A deficiency in perceived transparency can erode learners’ confidence in system reliability, thereby weakening behavioral engagement and intrinsic motivation [7]. When algorithmic decisions or feedback rationales remain unclear, learners may interpret system outputs as arbitrary or biased, prompting withdrawal from deeper cognitive engagement. This dynamic reveals that technological acceptance is inseparable from the socio-psychological conditions under which learning occurs.

From an educational standpoint, erosion of trust produces two intertwined consequences. First, it constrains the learner’s willingness to persist in self-regulated learning cycles supported by AI systems. Second, it hampers the cultivation of reflective inquiry and innovation-oriented thinking—skills regarded as the cornerstone of innovation literacy. Transparent and trustworthy learning environments encourage students to interrogate, interpret, and reconstruct knowledge rather than passively consume information. As recent research on innovation and entrepreneurship education suggests, innovation competence emerges from reflective engagement, ethical awareness, and sustained exploration [1,8,9]. Therefore, ensuring system transparency is not merely a technical imperative but also a pedagogical one that directly influences the formation of innovation-relevant capacities.

Consequently, the central research problem of this study concerns the mechanism through which technical transparency (the extent to which users can comprehend, trace, and evaluate the logic of an intelligent system) affects perceived trustworthiness, and how this relationship shapes users’ continued usage intentions. Understanding this chain of influence is critical for designing intelligent tutoring systems that both sustain learner engagement and contribute to the broader educational mission of cultivating innovative, autonomous, and ethically responsible graduates.

1.3. Research Gap

Despite a growing body of research on technology acceptance and user trust, several key gaps remain in the context of intelligent tutoring systems and higher education.

(1) Contextual Limitation. Most previous studies have examined trust in commercial or general-purpose AI applications, such as recommendation engines or social-media algorithms, where success is measured by consumer satisfaction or retention rates. Comparatively few have investigated educational environments in which trust shapes learning persistence and innovation competence. The distinctive pedagogical objectives and ethical responsibilities inherent to higher education make it essential to analyze trust formation within ITS specifically.

(2) Mechanistic Ambiguity. Although transparency is widely acknowledged as a precursor to trust, the causal processes through which technical transparency influences perceived trustworthiness and continued engagement remain insufficiently validated. Empirical evidence confirming the mediating function of perceived trustworthiness is especially scarce in educational technology research, leaving theoretical propositions under-tested and potentially context-dependent.

(3) Educational Implication Deficit. Existing models frequently conceptualize continued usage merely as behavioral intention, without linking it to deeper educational outcomes such as reflective inquiry, ethical reasoning, or innovation literacy cultivation. This limitation restricts understanding of how trust-related mechanisms contribute to learners’ broader cognitive and creative development—dimensions emphasized in China’s “innovation entrepreneurship–high-quality talent” framework.

Bridging these gaps necessitates an integrated approach that simultaneously explains the behavioral, psychological, and educational implications of trust in intelligent tutoring systems. Specifically, research must illuminate how transparent and explainable AI design principles can promote both sustained engagement and innovation-oriented learning behaviors.

1.4. Research Purpose and Questions

The primary objective of this study is to construct and empirically validate a mediated model explaining the relationships among technical transparency, perceived trustworthiness, and continued usage intentions within intelligent tutoring systems. Building upon the Unified Theory of Acceptance and Use of Technology (UTAUT), the study extends traditional technology-acceptance perspectives by embedding them within an educational-innovation framework. The model postulates that when learners perceive high levels of technical transparency—manifested through explainability, traceability, and accountability—they are more likely to develop trust in the system. This trust, in turn, strengthens their intention to continue engaging with ITS and facilitates reflective and innovation-oriented learning processes.

To achieve these objectives, the study addresses the following research questions:

RQ1: Does perceived technical transparency of AI-based educational systems significantly affect students’ continued usage intentions?

RQ2: Does perceived trustworthiness mediate the relationship between perceived technical transparency and students’ continued usage intentions?

By examining these questions through quantitative methods, this research aims to clarify the psychological mechanisms connecting transparency, trust, and sustained engagement in intelligent learning environments. In doing so, it provides empirical evidence for designing trustworthy educational technologies that not only encourage continuous system use but also contribute to the cultivation of innovation literacy among university students. The findings are expected to inform both theoretical discourse and institutional practice, offering insights for policymakers, educators, and developers seeking to align intelligent tutoring systems with the overarching goals of innovation-driven higher-education reform.

1.5. Research Significance

1.5.1. Theoretical Contributions

This study advances the theoretical understanding of trust formation and user engagement in intelligent tutoring systems (ITS) by integrating constructs from technology-acceptance theory with the educational paradigm of innovation literacy cultivation. Its novelty lies in addressing three gaps in the existing literature, all grounded in the context of educational AI and China’s innovation-driven policy framework. First, it reconceptualizes technology acceptance as a process mediated by users’ integrated epistemic and moral judgments. Unlike most prior research, which examines transparency–trust–intention dynamics in commercial AI applications (e.g., recommendation engines, social media algorithms) where success is measured by consumer satisfaction, this study anchors its model in the distinctive context of ITS. Here, user trust and engagement are not merely behavioral outcomes but prerequisites for learning persistence and competence development, which aligns the model with the pedagogical goals of higher education rather than commercial utility.

Furthermore, this study expands the discourse on digital trust by empirically validating perceived trustworthiness as a mediator between transparency and sustained engagement. Previous work has often operationalized trust as a singular construct focused on system competence [10] or treated it as a black-box antecedent to behavior [7]. The approach taken here, by contrast, not only confirms that transparency exerts influence indirectly through trust [11] but also clarifies what kind of trust matters for ITS: trust that integrates psychological confidence and moral assurance.

A third theoretical novelty lies in linking this transparency–trust–intention mechanism to China’s national innovation-driven education agenda (e.g., Education 5.0, Innovation-Driven Talent Cultivation) [6]. While the empirical model does not measure innovation literacy directly, it establishes how ITS design (transparency) and user psychology (trust) can enable the sustained interaction necessary for cultivating innovation-oriented learning behaviors—filling a gap between technology acceptance theory and China’s strategic goals for high-quality talent development.

1.5.2. Practical Implications

From a practical standpoint, the findings provide actionable insights for educators, system designers, and institutional administrators, all aligned with China’s innovation-driven education policies. Because innovation literacy is not measured directly, continued usage is treated throughout as a behavioral proxy for deeper engagement. First, the empirical evidence that transparency improves perceived trustworthiness suggests that ITS developers should prioritize explainable and interpretable design. Transparent interface features, such as visualized learning analytics, traceable recommendation rationales, and user-controlled data permissions, can foster students’ sense of agency and accountability, thereby sustaining engagement [4]. Implementing these design principles not only aligns technological advancement with the ethical imperatives of data governance and privacy protection but also creates conditions for students to engage critically with AI, an essential habit for innovation-oriented learning.

Second, for educators, the research underscores the pedagogical significance of building trusting learning climates. When students trust the feedback mechanisms of intelligent systems, they are more likely to adopt reflective learning strategies, engage in self-evaluation, and persist in problem-solving tasks [12]. These behaviors serve as precursors to innovation literacy, even if they do not directly equate to it. Integrating transparency education (through discussions of algorithmic logic, bias detection, and digital ethics) into course design can further help students develop balanced trust, meaning neither naive reliance nor excessive skepticism. This trust-building pedagogy transforms ITS use from passive tool adoption into active, reflective practice, laying the groundwork for the creative and critical thinking needed for innovation.

Third, institutional decision-makers can apply these findings to develop governance frameworks that balance innovation with accountability, aligning with national strategies promoting innovation entrepreneurship and high-quality talent development [9]. Establishing guidelines for transparency reporting, data ethics training, and user feedback mechanisms not only strengthens public confidence in AI-based education but also ensures that ITS implementation supports, rather than diverts from, innovation literacy goals. For example, universities might use the study’s transparency–trust insights to evaluate ITS not just on technical performance, but on their ability to foster sustained, reflective engagement—a behavioral proxy for the innovation-oriented learning that China’s education reform prioritizes.

By translating empirical results into context-specific practices, this research helps ensure that intelligent tutoring systems become sustainable instruments of educational transformation—tools that do not merely enhance usage, but lay the groundwork for the reflective, ethical, and persistent engagement that underpins innovation literacy in higher education.

2.1. Conceptualizing the Psychological–Behavioral Chain of Transparency, Trust, and Usage Intention

In the context of intelligent tutoring systems (ITS), user behavior is shaped by a dynamic psychological chain that connects technical transparency, perceived trustworthiness, and continued usage intention [13]. This chain not only determines whether learners sustain their interaction with AI‐driven learning environments but also reflects how they internalize technological experiences into self‐regulated and innovation-oriented learning dispositions. While previous studies have primarily interpreted these variables through the lens of technology acceptance, recent educational research emphasizes their broader pedagogical significance—particularly their role in cultivating reflective, creative, and ethically conscious learners [14,15].

Understanding this psychological and behavioral chain is thus essential for bridging the functional design of ITS with the educational mission of innovation literacy cultivation. In this framework, technical transparency functions as a cognitive enabler that stimulates reflective awareness; perceived trustworthiness acts as an affective and ethical mediator that transforms understanding into confidence; and continued usage intention serves as a behavioral manifestation of innovation engagement and persistence. The following sections review each construct in turn and explain how their interactions contribute to innovation‐driven talent development.

2.2. Technical Transparency as a Cognitive Foundation for Reflective and Innovative Learning

2.2.1. Definition and Dimensions

Technical transparency refers to the degree to which the processes, algorithms, and decision logic of an intelligent tutoring system are open, interpretable, and understandable to users [16,17]. It encompasses two key dimensions: explainability—the clarity with which system operations and recommendations can be articulated—and traceability—the capacity for users to examine the underlying data and computational logic that produce system outputs. Transparent systems enable users to comprehend how recommendations are generated, thus enhancing the perceived fairness and reliability of AI-assisted learning environments.

In pedagogical settings, transparency functions as more than a technical property; it constitutes a learning condition that facilitates epistemic access. When students are informed about how the system analyzes their input and generates feedback, they acquire meta-cognitive insight into both their learning processes and the functioning of the AI system itself [18]. Such comprehension promotes analytical reasoning, self-monitoring, and the ability to question automated judgments—skills that align closely with innovation literacy.

2.2.2. Transparency and Innovation Literacy Cultivation

From an educational perspective, technical transparency provides the cognitive foundation for cultivating innovation literacy. Innovation literacy refers to learners' capacity to think creatively, solve complex problems, and engage ethically in technology-mediated environments [19]. Transparent system design encourages students to move beyond passive consumption of information toward active inquiry and reflection. By making algorithmic reasoning visible, transparency transforms the learning process into an iterative dialogue between human cognition and machine intelligence. This dialogic relationship nurtures critical questioning, promotes adaptability, and fosters the capacity to integrate multiple perspectives—core competencies of innovation-oriented education [20].

Moreover, transparency enhances students' trust calibration skills, enabling them to balance reliance on and skepticism toward automated guidance. Such balanced trust reflects innovation ethics—the ability to apply technology responsibly and critically. Hence, technical transparency is not only instrumental for system adoption but also indispensable for nurturing the intellectual curiosity and ethical reflexivity required for high-quality talent formation.

2.3. Perceived Trustworthiness as an Affective and Ethical Mediator

2.3.1. Conceptualization of Perceived Trustworthiness

Perceived trustworthiness represents users' belief that an intelligent tutoring system is competent, reliable, and aligned with their learning interests [10]. It encompasses both cognitive assessments of system capability and affective judgments regarding benevolence and integrity [11]. In educational contexts, this construct transcends functional trust, incorporating moral and emotional dimensions that influence how learners internalize AI as a partner in knowledge construction.

Scholars generally differentiate between competence-based trust—confidence in the system's capacity to deliver accurate and relevant feedback—and benevolence-based trust—the perception that the system acts in the user's best educational interest [21,22]. Both dimensions jointly determine whether students interpret the system as a credible mentor or as a mechanical evaluator.

2.3.2. Trust Formation and Educational Confidence

Perceived trustworthiness operates as an affective bridge that connects technical understanding with behavioral engagement. Transparency enhances trust because it reduces cognitive uncertainty and promotes ethical assurance [4]. When learners understand how algorithms process their data, they develop a sense of agency and psychological safety. This confidence, in turn, stimulates intellectual risk-taking—an essential precursor to creative learning behaviors.

From the standpoint of innovation literacy cultivation, perceived trustworthiness signifies more than system acceptance; it represents educational confidence—the learner's belief in their ability to collaborate meaningfully with AI to achieve creative outcomes. A trustworthy ITS allows students to explore complex problems without fear of inequity or bias, encouraging experimentation and sustained inquiry. In this sense, trustworthiness serves both a psychological and moral function: it motivates engagement while ensuring that the engagement adheres to ethical standards of fairness and accountability.

2.3.3. Trust, Reflection, and Innovation Competence

The relationship between trust and innovation is iterative. When learners trust the pedagogical intent of AI, they are more likely to engage in reflective evaluation of its feedback, transforming technological interaction into cognitive co-creation. Repeated cycles of interaction, evaluation, and refinement foster innovation competence, which entails the ability to apply knowledge creatively, think systemically, and generate novel solutions [23,24]. Thus, perceived trustworthiness not only mediates the link between transparency and usage but also functions as the emotional and ethical substrate of innovation-oriented learning.

2.4. Continued Usage Intention as a Behavioral Manifestation of Innovation Engagement

2.4.1. Definition and Behavioral Meaning

Continued usage intention is defined as the user's deliberate willingness to persist in using a technological system over time [25]. In the domain of educational technology, this persistence reflects not merely repetitive use but sustained cognitive and emotional investment in technology-mediated learning. Continued usage signifies that learners perceive enduring value, relevance, and personal growth in interacting with ITS.

2.4.2. From Behavioral Persistence to Innovation-Oriented Engagement

Sustained interaction with ITS facilitates the development of self-regulated learning habits. Students who repeatedly engage with transparent and trustworthy systems tend to internalize feedback mechanisms, develop goal-setting strategies, and refine their approach to complex problem-solving [26]. These iterative learning cycles embody innovation engagement—the behavioral dimension of innovation literacy. Rather than using AI passively, learners transform their engagement into a process of experimentation, reflection, and creative synthesis.

Moreover, continued usage is strongly associated with learning resilience, the ability to sustain motivation in the face of uncertainty and challenge. In innovation and entrepreneurship education, resilience is considered a hallmark of innovative talent because it reflects persistence in exploration and adaptability to change [27,28]. Therefore, continued usage intention represents a measurable behavioral outcome that captures the learner's evolving innovative disposition.

2.4.3. Continued Usage and High-Quality Talent Cultivation

At the institutional level, fostering students' continued engagement with intelligent tutoring systems contributes directly to the strategic goal of cultivating high-quality, innovation-driven talent. Sustained interaction with AI environments encourages interdisciplinary thinking and lifelong learning—competencies increasingly demanded by knowledge economies. Consequently, continued usage intention serves as both an evaluative indicator of system success and an educational proxy for students' commitment to continuous innovation learning.

2.5. Integrating the Constructs: From Technological Acceptance to Innovation Literacy

2.5.1. Theoretical Integration

The interrelationships among technical transparency, perceived trustworthiness, and continued usage intention can be interpreted as a sequential process that transforms cognitive understanding into affective confidence and, ultimately, into behavioral commitment. Transparency enables learners to grasp how the system functions, thereby reducing uncertainty and enhancing comprehension. This cognitive clarity fosters trust, which motivates learners to engage persistently and reflectively with the system. Over time, sustained engagement cultivates innovation-related competencies such as critical thinking, creativity, and adaptive problem-solving.

This progression mirrors the pedagogical logic of innovation literacy cultivation:

Cognitive Phase (Understanding Transparency): Learners acquire insight into algorithmic mechanisms, promoting reflective awareness.

Affective Phase (Building Trust): Learners develop ethical confidence and a sense of partnership with technology.

Behavioral Phase (Sustained Engagement): Learners internalize innovation-oriented behaviors through iterative practice.

Hence, the transparency–trust–intention chain not only describes user behavior but also maps the developmental pathway from comprehension to innovation capacity.

2.5.2. Educational and Theoretical Implications

Positioning the transparency–trust–intention model within the framework of innovation education expands its theoretical relevance beyond traditional technology-acceptance paradigms. It illustrates that users' continued engagement with intelligent systems is not an end in itself but a means to foster reflective learning and innovation literacy. In doing so, it bridges behavioral science, educational psychology, and innovation policy.

The model also provides a theoretical foundation for aligning technological development with educational reform initiatives such as Education Digitalization 2035 and Innovation-Driven Talent Cultivation in China. By conceptualizing trust as both a psychological state and a pedagogical mechanism, this study underscores that technology adoption must be accompanied by ethical transparency and reflective learning practices to produce genuine innovation capacity.

2.5.3. Toward Innovation-Driven Education Reform

Ultimately, the integration of transparency, trust, and sustained usage intention establishes a conceptual bridge between AI system design and innovation-oriented pedagogy. When transparency informs system architecture, when trust mediates learner interaction, and when sustained engagement reflects cognitive transformation, intelligent tutoring systems can function as catalysts for innovation-driven education reform. They enable learners to experience technology not as an external authority but as a collaborative partner in the co-construction of knowledge and creativity.

In this sense, the model provides not only a behavioral explanation of user engagement but also a normative direction for educational innovation: the development of transparent, trustworthy, and participatory learning ecosystems that cultivate the reflective, ethical, and innovative qualities of high-quality talent.

2.6. Hypotheses Development

Drawing on the theoretical discussions above, the proposed research model integrates the psychological and behavioral chain of technical transparency–perceived trustworthiness–continued usage intention within the educational framework of innovation literacy cultivation. The model assumes that technical transparency enhances learners' understanding of system operations, thereby fostering trust and promoting sustained engagement. This sequential process reflects not only a behavioral adoption pathway but also a developmental progression from cognitive comprehension to innovation-oriented learning behavior. The following hypotheses are therefore proposed.

2.6.1. Technical Transparency and Perceived Trustworthiness

Technical transparency is expected to positively influence users' perceived trustworthiness of intelligent tutoring systems. When system processes and decision logics are made explicit and interpretable, learners are more likely to perceive the system as competent, reliable, and ethically accountable [4,16]. Transparent interaction reduces cognitive uncertainty, strengthens ethical assurance, and supports the formation of trust as both a psychological and moral evaluation.

H1: Technical transparency will significantly enhance users' perceived trustworthiness of intelligent tutoring systems.

2.6.2. Technical Transparency and Continued Usage Intention

Transparency also plays a critical role in motivating learners' continued engagement with intelligent tutoring systems. By facilitating understanding and control, transparent systems promote reflective learning and self-regulated interaction, which in turn increase students' willingness to sustain participation. From the perspective of innovation literacy, this behavioral continuity reflects learners' curiosity, adaptability, and commitment to iterative learning—core elements of innovative competence [17,29].

H2: Technical transparency will significantly enhance users' willingness to continue engaging with intelligent tutoring systems.

2.6.3. Perceived Trustworthiness and Continued Usage Intention

Perceived trustworthiness serves as an affective and ethical mediator that converts cognitive understanding into behavioral persistence. When learners trust the system's fairness and pedagogical intent, they are more inclined to rely on its feedback, explore challenging tasks, and reflect upon their own performance. This sustained interaction mirrors the transition from confidence to innovation-oriented engagement, demonstrating that trust is a prerequisite for creativity and perseverance in technology-mediated learning [10,11].

H3: Users' perceived trustworthiness of intelligent tutoring systems will significantly enhance their willingness to continue using them.

2.6.4. The Mediating Role of Perceived Trustworthiness

While transparency may directly encourage continued engagement, its influence is expected to operate primarily through perceived trustworthiness. Transparent system design increases learners' comprehension and reduces uncertainty, which subsequently strengthens trust and promotes sustained interaction. This mediating mechanism reflects the transformation from cognitive awareness to affective assurance and ultimately to behavioral persistence—an educational trajectory that underpins innovation literacy cultivation.

H4: Users' perceived trustworthiness of intelligent tutoring systems mediates the relationship between technical transparency and their continued willingness to use such systems.

2.6.5. Summary of Theoretical Logic

The hypothesized relationships collectively illustrate a sequential pathway through which transparency fosters trust, and trust sustains engagement, thereby contributing to the development of reflective, autonomous, and innovative learners. The model thus aligns with innovation-oriented education by emphasizing that technological transparency and trust are not merely determinants of usage behavior, but essential enablers of innovation literacy cultivation in intelligent learning environments.

3.1. Research Framework

The research framework was developed to empirically examine the psychological and behavioral mechanisms that connect technical transparency, perceived trustworthiness, and continued usage intention within intelligent tutoring systems (ITS), and to interpret these mechanisms in the context of innovation literacy cultivation. Drawing on the Unified Theory of Acceptance and Use of Technology (UTAUT), the model extends beyond traditional technology-acceptance perspectives by embedding educational and ethical dimensions relevant to innovation-driven learning.

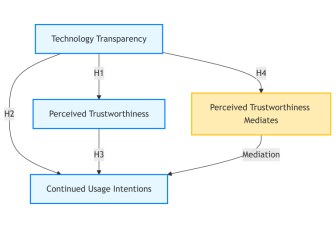

As illustrated in Figure 1, the conceptual model posits that technical transparency serves as the cognitive antecedent that shapes users' perceptions of trustworthiness, which in turn functions as an affective mediator influencing continued usage intention. The model reflects a sequential developmental logic—moving from cognitive comprehension (understanding transparency), to affective confidence (trust formation), and finally to behavioral persistence (continued engagement). This sequence parallels the educational trajectory of innovation literacy, which evolves from reflective understanding to ethical trust and innovative action.

Accordingly, the study empirically tests four hypotheses derived from this theoretical structure:

H1: Technical transparency will significantly enhance users' perceived trustworthiness of intelligent tutoring systems.

H2: Technical transparency will significantly enhance users' willingness to continue engaging with intelligent tutoring systems.

H3: Users' perceived trustworthiness of intelligent tutoring systems will significantly enhance their willingness to continue using them.

H4: Users' perceived trustworthiness of intelligent tutoring systems mediates the relationship between technical transparency and their continued willingness to use such systems.

The framework thereby integrates technological, psychological, and educational dimensions into a unified analytical model for understanding how intelligent tutoring systems contribute to innovation-oriented learning.

Figure 1. The conceptual framework of research.

3.2. Operational Definition and Measurement of Variables

3.2.1. Technical Transparency

Technical transparency is defined as the extent to which an intelligent tutoring system communicates its algorithmic logic, feedback rationale, and data utilization processes in a manner that users can comprehend and evaluate [16,17]. In educational contexts, transparency represents not only a system property but also a cognitive scaffold that enables learners to critically interpret automated feedback and make informed learning decisions.

From the perspective of innovation literacy cultivation, transparency supports reflective learning by encouraging students to question system-generated outcomes and to understand the reasoning underlying algorithmic judgments. Such interpretive awareness fosters epistemic curiosity and critical thinking—key components of innovative competence.

This construct was assessed using three adapted items from prior studies on AI transparency [11,18]. Respondents rated statements such as “The intelligent tutoring system provides clear explanations for its recommendations” and “I can trace how the system evaluates my learning progress” on a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree). Higher scores indicate greater perceived transparency and cognitive clarity.

3.2.2. Perceived Trustworthiness

Perceived trustworthiness refers to the learner's belief that the intelligent tutoring system is reliable, fair, and designed with educational benevolence [7,10]. It encompasses perceptions of system competence, integrity, and ethical accountability.

Within the framework of innovation literacy, trustworthiness functions as an affective–ethical bridge linking understanding with engagement. A trustworthy learning environment enables students to engage in risk-taking, experimentation, and reflective evaluation without fear of bias or data misuse. Hence, trust operates as a psychological condition for cultivating educational confidence—the willingness to explore and innovate through collaboration with intelligent systems.

Perceived trustworthiness was measured through five items adapted from the System Trustworthiness Scale [10]. Example statements include: “The system provides reliable and unbiased feedback,” and “The system protects users' privacy and data security.” Responses were captured using a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree). Higher scores denote stronger perceptions of competence, fairness, and accountability.

3.2.3. Continued Usage Intention

Continued usage intention is defined as the learner's deliberate willingness to persist in using an intelligent tutoring system for future learning [25]. It represents not only behavioral intention but also motivational persistence in technology-mediated learning contexts.

In innovation-oriented education, continued usage intention reflects a learner's behavioral manifestation of innovation engagement—the consistent application of reflective, adaptive, and creative learning strategies in collaboration with AI systems. Sustained engagement with transparent and trustworthy systems facilitates iterative learning cycles, resilience, and curiosity—behaviors characteristic of innovation literacy.

This construct was measured through three items adapted from prior educational technology research [26]. Example statements include: “I plan to continue using the intelligent tutoring system for future courses” and “I would recommend others to use the system.” All items employed a 5-point Likert scale. Higher aggregate scores indicate stronger behavioral commitment and sustained motivation.

3.2.4. Control Variables

To ensure analytical precision, four demographic factors were included as control variables: gender, academic grade, faculty discipline, and duration of ITS usage experience. These variables account for potential variations in technological familiarity, cognitive style, and disciplinary orientation that may influence user engagement or trust perceptions.

3.3. Questionnaire Design and Data Analysis Methods

3.3.1. Questionnaire Design and Sampling Procedures

A structured self-administered questionnaire was employed as the primary data collection instrument. All measurement items were adapted from validated scales, translated, and contextually refined for higher-education learners in China. Each statement utilized a 5-point Likert scale ranging from 1 (“Strongly Disagree”) to 5 (“Strongly Agree”). Higher composite means represent stronger alignment with the construct being measured.
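As an illustration of how composite means could be formed from the Likert items, the following minimal sketch averages each construct's items for one respondent. The item keys (`TT1`, `PT1`, `CUI1`, and so on) are hypothetical labels introduced here; only the item counts (three, five, and three) come from Section 3.2.

```python
from statistics import mean

# Hypothetical item keys; only the number of items per scale is taken from the paper.
SCALES = {
    "TT": ["TT1", "TT2", "TT3"],                  # technical transparency
    "PT": ["PT1", "PT2", "PT3", "PT4", "PT5"],    # perceived trustworthiness
    "CUI": ["CUI1", "CUI2", "CUI3"],              # continued usage intention
}


def composite_scores(response):
    """Return the mean of each scale's items for one respondent."""
    scores = {}
    for scale, items in SCALES.items():
        values = [response[item] for item in items]
        if any(not 1 <= v <= 5 for v in values):
            raise ValueError(f"{scale}: Likert responses must lie in 1..5")
        scores[scale] = mean(values)
    return scores


# Illustrative respondent, not taken from the study's data.
example = {
    "TT1": 4, "TT2": 5, "TT3": 3,
    "PT1": 4, "PT2": 4, "PT3": 5, "PT4": 3, "PT5": 4,
    "CUI1": 5, "CUI2": 4, "CUI3": 4,
}
print(composite_scores(example))
```

Averaging rather than summing keeps every construct on the original 1 to 5 scale, which makes scores comparable across scales with different numbers of items.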

Data were collected between May and June 2025 through the online platform Questionnaire Star, following a convenience sampling strategy targeting students with prior experience using the AI tutoring system developed by Wuhan Zhixiang Culture Technology Co., Ltd. Out of 348 collected responses, 45 were excluded due to incomplete answers or patterned responses, resulting in 303 valid questionnaires (valid response rate = 303/348 = 87.07%). This sample size meets the requirements for regression-based mediation analysis.

3.3.2. Data Analysis Procedures

Data analysis was conducted using IBM SPSS Statistics 28.0 with Hayes' PROCESS Macro (Version 4.1), following a multi-step analytical procedure aligned with the research hypotheses to ensure both basic relationship verification and robust mediation effect testing.

(1) Descriptive and Preliminary Analysis

Descriptive statistics were calculated to summarize demographic profiles and mean construct scores. Normality, multicollinearity, and outlier checks were performed to ensure data integrity.

(2) Reliability and Validity Testing

Internal consistency reliability was evaluated using Cronbach's α coefficients, with values above 0.7 indicating satisfactory reliability. Construct validity was assessed through exploratory factor analysis (EFA), examining factor loadings (> 0.7), composite reliability (CR > 0.7), and average variance extracted (AVE > 0.5). Together, these indicators were used to establish the reliability and convergent validity of the scales.
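The three indicators named above follow standard formulas, sketched below under the usual assumption that each item's error variance equals one minus its squared standardized loading. The demo scores and loadings are illustrative values only, not the study's data.

```python
from statistics import variance


def cronbach_alpha(items):
    """items: one list of respondent scores per scale item (equal lengths)."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # per-respondent sum score
    item_variance = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_variance / variance(totals))


def composite_reliability(loadings):
    """CR from standardized loadings, with error variance 1 - loading**2."""
    s = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return s ** 2 / (s ** 2 + error)


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


# Illustrative values only.
demo_items = [[4, 5, 3, 4, 2, 5], [4, 4, 3, 5, 2, 4], [5, 4, 2, 4, 3, 5]]
demo_loadings = [0.72, 0.75, 0.71]

print("alpha:", round(cronbach_alpha(demo_items), 3))
print("CR:", round(composite_reliability(demo_loadings), 3))
print("AVE:", round(average_variance_extracted(demo_loadings), 3))
```

Because α depends on the number of items while CR and AVE depend on the loadings, a short scale can fall below 0.7 on α yet still clear the CR and AVE thresholds.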

(3) Hypothesis Testing

To comprehensively examine the hypothesized relationships (especially the mediating role of perceived trustworthiness), a two-step approach combining traditional and modern mediation testing methods was adopted:

① Hierarchical Multiple Regression. This step was used to verify the basic path relationships required for mediation:

Step 1: Enter control variables (gender, grade, faculty discipline, ITS usage experience) to predict the mediating variable (perceived trustworthiness).

Step 2: Add the independent variable (technical transparency) to the model in Step 1 to test whether technical transparency significantly predicts perceived trustworthiness (to verify the premise of H1 and the first condition for mediation).

Step 3: Use control variables to predict the dependent variable (continued usage intentions) first, then add the independent variable (technical transparency) to test whether technical transparency significantly predicts continued usage intentions (to verify H2 and the second condition for mediation).

Step 4: Add the mediating variable (perceived trustworthiness) to the model in Step 3 to test two key outcomes: (a) whether perceived trustworthiness significantly predicts continued usage intentions (to verify H3 and the third condition for mediation); (b) whether the direct effect of technical transparency on continued usage intentions becomes non-significant (a preliminary indicator of full mediation).

The statistical significance of regression coefficients and changes in explanatory power were used to judge support for each hypothesis.
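The stepwise logic above can be sketched with ordinary least squares on synthetic data. This is a simplified illustration rather than the study's SPSS procedure: it uses a single hypothetical control variable instead of four, generates its own data, and reports only coefficients and R² (no significance tests).

```python
import random


def ols(X, y):
    """Least-squares fit with intercept; returns (coefficients, R^2)."""
    rows = [[1.0] + list(r) for r in X]
    p = len(rows[0])
    # Normal equations (X'X) b = X'y stored as an augmented matrix.
    A = [[sum(r[i] * r[j] for r in rows) for j in range(p)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(p)]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(p):
        piv = max(range(c, p), key=lambda r: abs(A[r][c]))
        A[c], A[piv] = A[piv], A[c]
        for r in range(p):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [u - f * v for u, v in zip(A[r], A[c])]
    beta = [A[i][p] / A[i][i] for i in range(p)]
    y_bar = sum(y) / len(y)
    ss_res = sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2
                 for r, yi in zip(rows, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return beta, 1 - ss_res / ss_tot


# Synthetic data in which TT affects CUI only through PT (assumed, not the study's data).
rng = random.Random(0)
n = 300
ctrl = [rng.choice([0, 1]) for _ in range(n)]          # one hypothetical control
tt = [rng.gauss(0, 1) for _ in range(n)]
pt = [0.3 * t + 0.3 * c + rng.gauss(0, 1) for t, c in zip(tt, ctrl)]
cui = [0.6 * m + rng.gauss(0, 1) for m in pt]

_, r2_controls = ols([[c] for c in ctrl], cui)                          # controls only
b_total, r2_tt = ols([[c, t] for c, t in zip(ctrl, tt)], cui)           # add TT
b_full, r2_full = ols([[c, t, m] for c, t, m in zip(ctrl, tt, pt)], cui)  # add PT

print("R2 by block:", round(r2_controls, 3), round(r2_tt, 3), round(r2_full, 3))
print("TT coefficient before/after adding PT:", round(b_total[2], 3), round(b_full[2], 3))
```

The change in R² between blocks shows how much each added variable contributes, and the shrinkage of the TT coefficient once PT enters is the pattern that Step 4 looks for.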

② Bias-Corrected Bootstrapping Mediation Analysis [30]. To address the limitations of traditional hierarchical regression (e.g., sensitivity to non-normal distributions of indirect effects), Hayes' PROCESS Macro (Model 4) was used to conduct a bias-corrected bootstrapping analysis with 5,000 resamples, an approach widely recognized as the gold standard for modern mediation testing. This analysis quantified:

The indirect effect of technical transparency on continued usage intentions via perceived trustworthiness (including effect value, standard error [SE], and 95% bias-corrected confidence interval [BC CI]).

The direct effect of technical transparency on continued usage intentions (after controlling for perceived trustworthiness) and the total effect of technical transparency on continued usage intentions.

A significant indirect effect was confirmed if the 95% BC CI did not include 0. The claim of “full mediation” was substantiated only when two conditions were met: (a) the direct effect of technical transparency on continued usage intentions became non-significant in the hierarchical regression (Step 4); (b) the 95% BC CI of the indirect effect did not include 0, and the indirect effect accounted for 100% of the total effect (assessed via effect proportion calculation: indirect effect / total effect × 100%).
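The bootstrap logic can be illustrated with a minimal sketch for a simple mediation model without covariates. Note that it swaps in a plain percentile interval in place of the bias-corrected interval described above, and that the data are synthetic; it is not a reimplementation of the PROCESS macro.

```python
import random


def cov(u, v):
    """Sample covariance of two equal-length lists."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def mediation_paths(x, m, y):
    """Return (a, b, direct, indirect, total) for the model X -> M -> Y."""
    sxx, smm = cov(x, x), cov(m, m)
    sxm, sxy, smy = cov(x, m), cov(x, y), cov(m, y)
    det = sxx * smm - sxm ** 2
    a = sxm / sxx                            # M regressed on X
    b = (sxx * smy - sxm * sxy) / det        # Y on M, controlling for X
    direct = (smm * sxy - sxm * smy) / det   # Y on X, controlling for M
    return a, b, direct, a * b, sxy / sxx


def bootstrap_indirect(x, m, y, n_boot=5000, seed=0):
    """Percentile 95% CI for the indirect effect a*b (not bias-corrected)."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]   # resample respondents
        xs, ms, ys = ([v[i] for i in idx] for v in (x, m, y))
        estimates.append(mediation_paths(xs, ms, ys)[3])
    estimates.sort()
    return estimates[int(0.025 * n_boot)], estimates[int(0.975 * n_boot) - 1]


# Synthetic data with a fully mediated effect (assumed values).
rng = random.Random(1)
x = [rng.gauss(0, 1) for _ in range(300)]
m = [0.5 * xi + rng.gauss(0, 1) for xi in x]
y = [0.6 * mi + rng.gauss(0, 1) for mi in m]

a, b, direct, indirect, total = mediation_paths(x, m, y)
lo, hi = bootstrap_indirect(x, m, y, n_boot=1000)   # fewer resamples to keep the demo fast
print("indirect:", round(indirect, 3), "95% CI:", (round(lo, 3), round(hi, 3)))
print("direct:", round(direct, 3), "total:", round(total, 3))
```

In this least-squares setting the total effect equals the direct effect plus the indirect effect exactly, which is what makes the effect proportion (indirect / total) interpretable.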

(4) Robustness and Educational Interpretation

Beyond statistical validation, interpretive analysis was performed to link quantitative outcomes with educational implications. Specifically, mediation results were interpreted as evidence of how transparency-induced trust promotes reflective, sustained, and innovation-oriented learning engagement. This interpretive step ensured that the empirical results were contextualized within the theoretical goal of innovation literacy cultivation rather than limited to behavioral prediction.

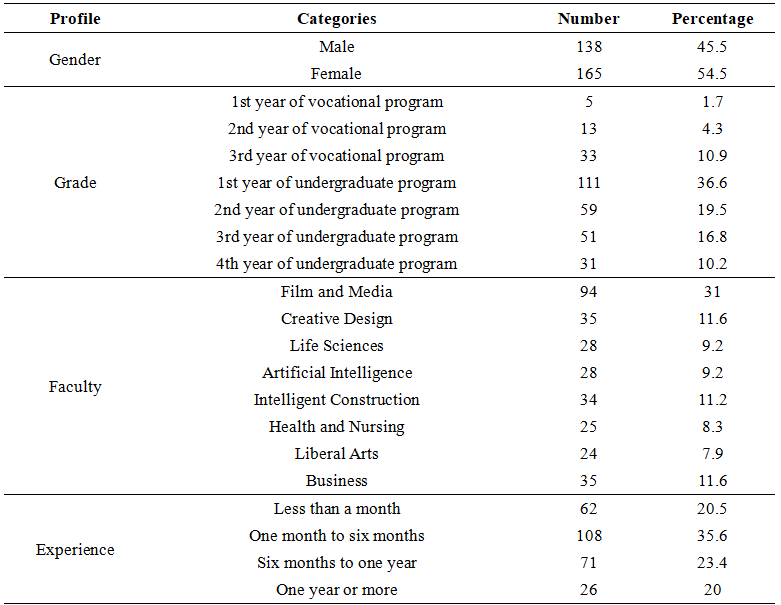

4.1. Demographic Profile

Table 1 summarizes the demographic characteristics of the student respondents.

Table 1. Demographic profile

Source: Compiled by this research.

4.2. Reliability and Validity Analysis

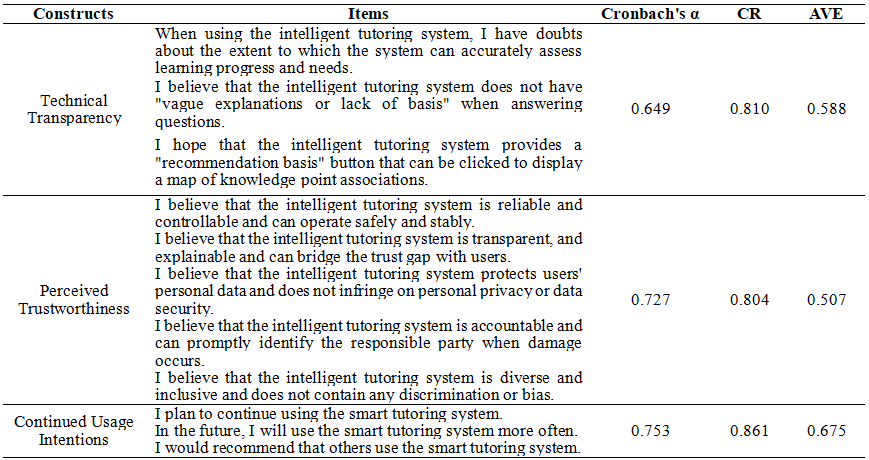

Table 2 summarizes the reliability and validity assessments for the study's measurement scales. Most constructs demonstrate acceptable internal consistency, with Cronbach's α coefficients exceeding the conventional 0.70 threshold. The technical transparency construct reports a slightly lower α value of 0.649; however, given that Cronbach's α is highly sensitive to the number of items and this construct contains only three indicators, the value remains acceptable when interpreted alongside its composite reliability (CR > 0.70) and average variance extracted (AVE > 0.50). For perceived trustworthiness, the AVE of 0.507 is only marginally above the recommended cutoff of 0.50, but it still indicates adequate convergent validity. To further support scale validity, an exploratory factor analysis (EFA) was conducted rather than a full confirmatory factor analysis, and the results show that all standardized factor loadings exceed 0.70, providing evidence of satisfactory item-level measurement quality.

Regarding mediation testing, this study employed Hayes' PROCESS macro with 5,000 bootstrap samples, which provides a more rigorous and contemporary approach to mediation analysis compared with the traditional Baron and Kenny (1986) procedure. The bootstrap confidence intervals allow more robust inference about indirect effects and strengthen the methodological rigor of the analysis. The sample size of 303 university students meets the requirements for both EFA and PROCESS-based regression models.

To assess the potential common method variance (CMV) arising from the exclusive use of self-reported data, Harman's single-factor test was employed. An unrotated exploratory factor analysis (EFA) was conducted on all scale items (including items measuring technical transparency, perceived trustworthiness, and continued usage intentions) to examine the variance explained by the first extracted factor. The first unrotated factor accounted for 35.908% of the total variance, which is below the 40% threshold typically used to identify severe CMV. This result suggests that common method variance is unlikely to be a serious concern in the study's data, supporting the credibility of the subsequent statistical analyses and empirical conclusions.
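A rough sketch of the quantity examined in Harman's test is given below. It assumes principal-component extraction, so the first factor's share of variance is the largest eigenvalue of the item correlation matrix divided by the number of items; the eigenvalue is approximated by power iteration, and the demo data are synthetic.

```python
import random


def pearson(u, v):
    """Pearson correlation of two equal-length lists."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    suv = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    suu = sum((a - mu) ** 2 for a in u)
    svv = sum((b - mv) ** 2 for b in v)
    return suv / (suu * svv) ** 0.5


def first_factor_share(columns, iterations=500):
    """Share of total variance carried by the first unrotated principal component."""
    p = len(columns)
    R = [[pearson(columns[i], columns[j]) for j in range(p)] for i in range(p)]
    v = [1.0] * p
    for _ in range(iterations):            # power iteration toward the top eigenvector
        w = [sum(R[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    eigenvalue = sum(v[i] * sum(R[i][j] * v[j] for j in range(p)) for i in range(p))
    return eigenvalue / p


# Synthetic items sharing a moderate common factor (assumed values, not the study's data).
rng = random.Random(2)
common = [rng.gauss(0, 1) for _ in range(300)]
items = [[0.5 * c + rng.gauss(0, 1) for c in common] for _ in range(6)]
share = first_factor_share(items)
print(f"first factor explains {share:.1%} of total variance")
```

A share close to 100% would mean one factor dominates all items, while a share near 1/p means the items are essentially unrelated; the test compares the observed share against a chosen cutoff.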

Table 2. Reliability for constructs

Source: Compiled by this research.

4.3. Correlation Analysis

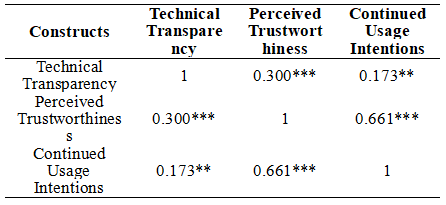

To examine the linear relationships among the key variables, Pearson correlation coefficients were calculated. The analysis reveals significant positive correlations among Transparency, Trustworthiness, and Intentions, with all coefficients achieving statistical significance at the conventional level.

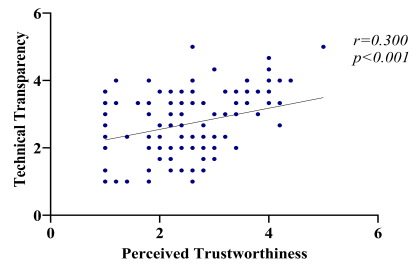

First, Transparency is moderately and positively correlated with Trustworthiness (r=0.300, p<0.001), indicating that higher levels of technical transparency are associated with higher user-perceived trustworthiness of intelligent tutoring systems. This finding provides initial support for H1, which proposes that technical transparency enhances users' perceived trustworthiness.

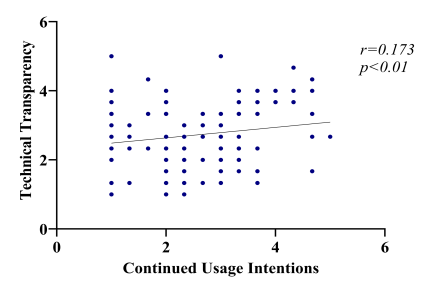

Second, Transparency shows a significant but weaker positive correlation with Intentions (r=0.173, p<0.01). This suggests that increasing the transparency of intelligent tutoring systems may positively influence users' willingness to continue using them, thereby offering preliminary evidence for H2.

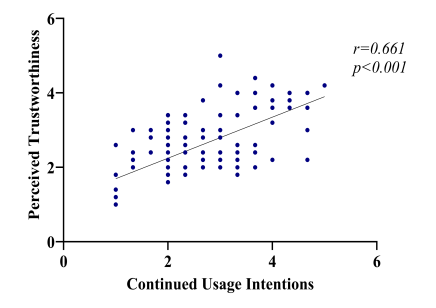

Third, Trustworthiness is strongly and positively correlated with Intentions (r=0.661, p<0.001), representing the strongest relationship among all variables. This result implies that users' perceived trustworthiness plays a crucial role in shaping their continued usage intentions, providing robust support for H3.

Overall, the correlations among the three variables align with the theoretical expectations of the proposed model. The significant positive relationships indicate that technical transparency is associated both with user intentions and with users' trust perceptions, forming an empirical basis for testing the mediating role of trustworthiness in H4.
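The significance levels attached to the reported correlations can be related to the usual t statistic for a Pearson coefficient, t = r·√(n−2)/√(1−r²). The sketch below applies it to the three coefficients quoted above, assuming all 303 valid responses enter each pairwise correlation (the text does not state the pairwise n explicitly).

```python
from math import sqrt


def t_from_r(r, n):
    """t statistic for testing a Pearson correlation against zero (df = n - 2)."""
    return r * sqrt(n - 2) / sqrt(1 - r ** 2)


N = 303  # assumed pairwise sample size
reported = {"TT-PT": 0.300, "TT-CUI": 0.173, "PT-CUI": 0.661}

for pair, r in reported.items():
    print(pair, "t =", round(t_from_r(r, N), 2), "df =", N - 2)
```

The ordering of the t values mirrors the ordering of the coefficients, with the transparency and intentions pair giving the smallest statistic, in line with its weaker reported significance level.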

Table 3. Correlation Analysis

Note: *p<0.05; **p<0.01; ***p<0.001.

Figure 2. The Correlation Between Technical Transparency and Perceived Trustworthiness.

Figure 3. The Correlation Between Technical Transparency and Continued Usage Intentions.

Figure 4. The Correlation Between Perceived Trustworthiness and Continued Usage Intentions.

4.4. Regression Analysis

4.4.1. Testing the Effect of Technical Transparency on Perceived Trustworthiness

The first hypothesis proposes that technical transparency positively influences users' perceived trustworthiness of intelligent tutoring systems. This expectation aligns with prior theoretical arguments suggesting that when a system's internal processes, decision mechanisms, and instructional logic are made explicit, users experience reduced cognitive uncertainty and greater interpretability of system behavior. Such transparency strengthens perceptions of system competence, reliability, and ethical accountability—core components of trust formation in human–AI interaction.

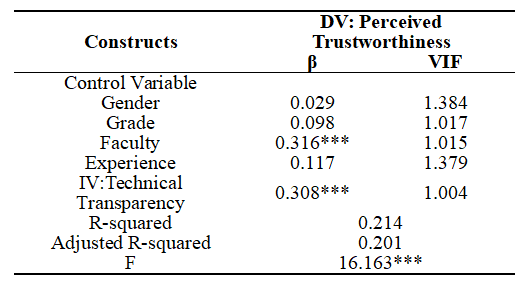

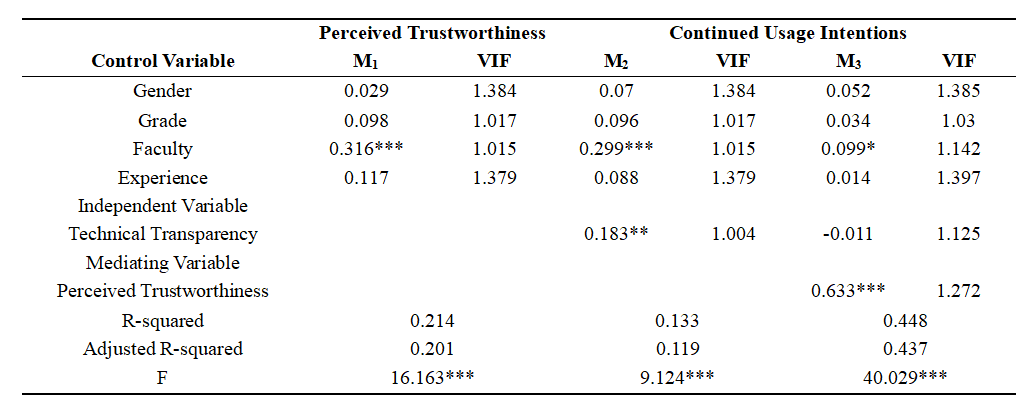

To empirically test this relationship, a regression analysis was conducted with perceived trustworthiness as the dependent variable and gender, grade, faculty, and prior experience included as control variables. As shown in Table 4, the overall model is statistically significant (F=16.163), explaining 21.4% of the variance in perceived trustworthiness (R²=0.214; Adjusted R²=0.201). Among the control factors, only faculty exhibits a significant effect (β=0.316, p<0.001), while the remaining demographic variables show no meaningful influence.

Consistent with theoretical expectations, technical transparency demonstrates a significant positive effect on perceived trustworthiness (β=0.308, p<0.001). This result empirically confirms that when learners are provided with clear and interpretable information about how the intelligent tutoring system functions, they are more likely to perceive the system as fair, competent, and pedagogically aligned with their learning needs. Such perceptions reflect the psychological and ethical dimensions of trust formation described earlier, in which transparency reduces ambiguity and enhances ethical assurance during human–AI interaction.

These findings provide strong support for H1, reinforcing the theoretical logic that transparency is not merely a usability feature but a foundational determinant of trust in intelligent learning environments. By fostering cognitive clarity and moral assurance, technical transparency plays a pivotal role in shaping learners' willingness to rely on intelligent tutoring systems—laying the groundwork for the downstream effects on engagement and innovation literacy development addressed in subsequent hypotheses.

Table 4. Technical Transparency To Perceived Trustworthiness Regression Analysis

Note: *p<0.05; **p<0.01; ***p<0.001.

4.4.2. Testing the Effect of Technical Transparency on Continued Usage Intentions

The second hypothesis posits that technical transparency positively influences learners' continued usage intentions toward intelligent tutoring systems. Transparency enhances users' understanding of system operations, strengthens their sense of control, and facilitates reflective and self-regulated learning. When learners can interpret how feedback is generated and on what basis the system makes pedagogical decisions, they are more inclined to view the interaction as meaningful and manageable. This sense of interpretability and agency encourages behavioral continuity, which aligns with innovation literacy theory emphasizing curiosity, adaptability, and iterative learning as cornerstones of sustained engagement.

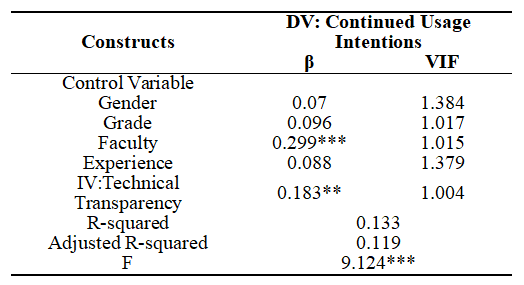

To empirically examine this relationship, a multiple regression analysis was conducted with continued usage intentions as the dependent variable and demographic characteristics (gender, grade, faculty, and prior experience) included as control variables. As shown in Table 5, the overall model is statistically significant (F=9.124), accounting for 13.3% of the variance in continued usage intentions (R²=0.133; Adjusted R²=0.119). Among the control variables, faculty again exerts a significant positive effect (β=0.299, p<0.001), while gender, grade, and experience do not significantly predict continued usage intentions.

Consistent with theoretical expectations, technical transparency has a significant and positive effect on continued usage intentions (β=0.183, p<0.01). Although the effect size is smaller than that observed for perceived trustworthiness, the relationship remains robust and meaningful. This result suggests that when learners have clearer insight into how the intelligent tutoring system functions, they are more willing to maintain long-term engagement. This empirical finding supports the theoretical mechanism described earlier: transparency promotes comprehension and reduces ambiguity, thereby strengthening learners' motivation to repeatedly interact with the system.

All VIF values range from 1.004 to 1.384, indicating no multicollinearity concerns.

Taken together, these findings provide strong empirical support for H2, demonstrating that transparency serves not only as a cognitive aid but also as a motivational enabler of sustained engagement in technology-enhanced learning environments.

Table 5. Technical Transparency To Continued Usage Intentions Regression Analysis

Note: *p<0.05; **p<0.01; ***p<0.001.

4.4.3. Testing the Effect of Perceived Trustworthiness on Continued Usage Intentions

The third hypothesis predicts that perceived trustworthiness significantly enhances learners' continued usage intentions toward intelligent tutoring systems. Perceived trustworthiness operates as an affective and ethical bridge that transforms cognitive understanding into behavioral persistence. When learners believe that the system is fair, reliable, and pedagogically aligned with their learning goals, they are more inclined to rely on its feedback, engage in reflective learning, and maintain long-term interaction. Trust, therefore, functions not only as an emotional evaluation but also as a motivational driver that encourages exploration, perseverance, and innovation-oriented engagement in technology-mediated learning environments.

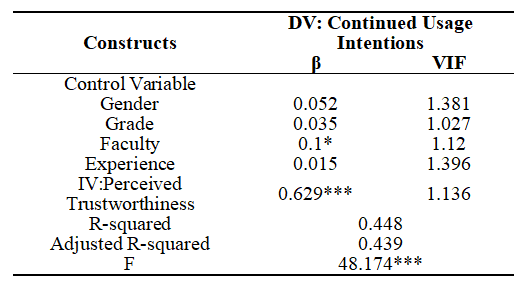

To validate this theoretical expectation, a multiple regression analysis was conducted with continued usage intentions as the dependent variable and demographic characteristics (gender, grade, faculty, and prior experience) included as control factors. As shown in Table 6, the overall model is highly significant (F = 48.174), accounting for 44.8% of the variance in continued usage intentions (R² = 0.448; Adjusted R² = 0.439). Compared with the models for H1 and H2, the explanatory power of this model is substantially higher, indicating that perceived trustworthiness is a dominant predictor of sustained engagement.
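The fit statistics reported for the three regression models can be cross-checked from R² alone. The sketch below assumes each model contains five predictors (four controls plus one focal variable) and n = 303; the predictor count is an inference from the text rather than a stated figure.

```python
def f_statistic(r2, n, k):
    """Overall F for a regression with k predictors and n observations."""
    return (r2 / k) / ((1 - r2) / (n - k - 1))


def adjusted_r2(r2, n, k):
    """Adjusted R^2 penalizing the number of predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


N, K = 303, 5  # K = 5 is an assumption: four controls plus the focal predictor
reported_r2 = {"Table 4 (TT -> PT)": 0.214,
               "Table 5 (TT -> CUI)": 0.133,
               "Table 6 (PT -> CUI)": 0.448}

for model, r2 in reported_r2.items():
    print(model, "F =", round(f_statistic(r2, N, K), 2),
          "adj. R2 =", round(adjusted_r2(r2, N, K), 3))
```

Under these assumptions the recomputed values land close to the reported F statistics (16.163, 9.124, and 48.174) and adjusted R² values, with small differences attributable to rounding of R² to three decimals.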

Among the control variables, faculty shows a modest but statistically significant effect (β= 0.100, p < 0.05), suggesting that learners from different academic units may vary in their tendency to maintain long-term system use. Gender, grade, and experience do not exhibit significant influence.

Crucially, perceived trustworthiness demonstrates a strong and highly significant positive effect on continued usage intentions (β= 0.629, p < 0.001). This coefficient represents the strongest effect observed across all regression models in this study, underscoring the central role of trust in shaping learners' commitment to continued interaction. The magnitude of this effect indicates that trust functions as a primary mechanism through which learners decide whether to rely on and repeatedly engage with intelligent tutoring systems.

All VIF values range from 1.027 to 1.396, indicating no multicollinearity concerns.

These findings provide compelling empirical support for H3, confirming that perceived trustworthiness is a powerful determinant of learners' continued usage intentions. By fostering emotional assurance and perceived ethical reliability, trust serves as a foundational psychological condition that sustains learners' engagement in intelligent learning environments.

Table 6. Perceived Trustworthiness To Continued Usage Intentions Regression Analysis

Note: *p<0.05; **p<0.01; ***p<0.001.

4.5. Mediation Test: TT→PT→CUI (PROCESS Model 4)

To examine the mediating role of Perceived Trustworthiness (PT) in the relationship between Technical Transparency (TT) and Continued Usage Intentions (CUI), this study first employed hierarchical multiple regression analysis to verify the basic relationships among variables (Table 9), then utilized Hayes' PROCESS Macro (Model 4) with 5,000 bootstrap resamples for mediation effect testing, a standard approach in academic research.

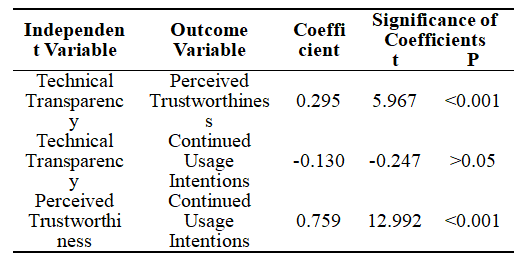

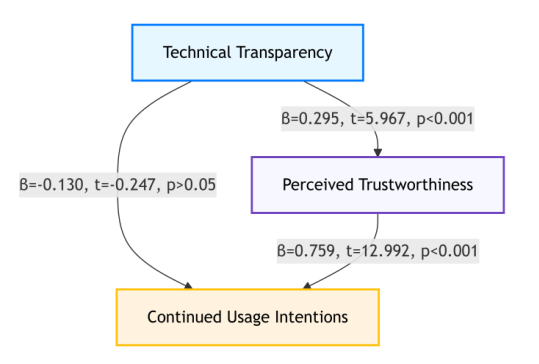

First, the path coefficients of the model were analyzed (Table 7; Figure 5): The direct effect of TT on PT was statistically significant (β = 0.295, t = 5.967, p < 0.001), meaning higher TT significantly increases PT. In contrast, TT's direct effect on CUI was non-significant (β = -0.013, t = -0.247, p > 0.05), indicating no direct link between TT and CUI. Additionally, PT's effect on CUI was strongly significant (β = 0.759, t = 12.992, p < 0.001), showing PT positively predicts CUI.

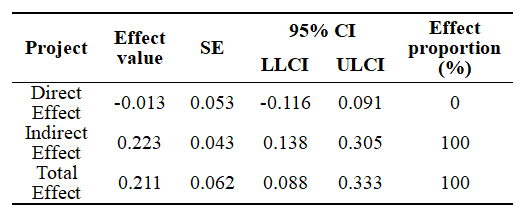

Bootstrap results for effect decomposition revealed (Table 8): The direct effect of TT on CUI was -0.013 (SE = 0.0526), with a 95% confidence interval (CI) of [-0.1164, 0.0905] (including 0, thus non-significant). The indirect effect (TT→PT→CUI) was 0.223 (SE = 0.0426), with a 95% CI of [0.1376, 0.3049] (excluding 0, thus significant). The total effect of TT on CUI was 0.211 (SE = 0.0621, 95% CI = [0.0882, 0.3328], significant).

In terms of effect proportion, the indirect effect accounted for effectively all of the total effect (reported as 100%), while the non-significant direct effect contributed effectively nothing (reported as 0%). These results, consistent with the hierarchical regression findings (Table 9), support a full mediation mechanism: TT does not directly influence CUI, but exerts a positive impact on CUI entirely through enhancing PT. This clarifies that technical transparency shapes users' continued usage intentions by fostering their perceived trustworthiness, as illustrated by the path coefficient results (Table 7; Figure 5) and mediation effect decomposition (Table 8).
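The effect decomposition can be retraced arithmetically from the coefficients quoted in the text for Tables 7 and 8. The sketch below uses only those reported values; the variable names are introduced here for illustration.

```python
# Values quoted in the text (Tables 7 and 8).
a, b = 0.295, 0.759                                # TT -> PT and PT -> CUI paths
direct, indirect, total = -0.013, 0.223, 0.211     # bootstrap effect decomposition

product_of_paths = a * b                  # should reproduce the indirect effect
sum_of_effects = direct + indirect        # should reproduce the total effect
indirect_share = indirect / total * 100   # effect proportion in percent

print("a * b =", round(product_of_paths, 3))
print("direct + indirect =", round(sum_of_effects, 3))
print("indirect / total =", round(indirect_share, 1), "%")
```

The product of the two paths and the sum of the direct and indirect effects agree with the reported indirect and total effects to within rounding. The raw ratio of indirect to total effect comes out slightly above 100% because the point estimate of the direct effect is negative, although non-significant, which is consistent with reading the mediation as full.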

Figure 6 provides a visual summary of the study's empirical findings, mapping the validated relationships among the core variables.

Table 7. Path Coefficients & Significance: TT, PT, and CUI

Figure 5. Path Coefficients & Significance: TT, PT, and CUI

Table 8. Mediation Effect Decomposition Results: TT→PT→CUI

Table 9. Hierarchical Multiple Regression Analysis

Note: *p<0.05; **p<0.01; ***p<0.001.

Figure 6. Final model structure

5.1. Research Conclusions

This study set out to explore the psychological and behavioral mechanisms through which technical transparency influences students' continued usage intentions toward intelligent tutoring systems (ITS), with particular attention to the mediating role of perceived trustworthiness. By integrating the Unified Theory of Acceptance and Use of Technology (UTAUT) with the emerging paradigm of innovation literacy cultivation, the study sought to construct a comprehensive model that explains not only why students continue to use AI-based learning systems, but also how system design features contribute to reflective, autonomous, and innovation-oriented learning behaviors.

The empirical findings provide robust support for all four hypotheses. First, technical transparency was shown to exert a significant positive effect on perceived trustworthiness (H1). This confirms the theoretical argument that when learners can interpret algorithmic logic, feedback rationales, and system operations, their cognitive uncertainty is reduced, and their confidence in the system's fairness, capability, and benevolence increases. Transparency thus functions as a cognitive and ethical anchor that helps students make sense of AI-mediated learning environments.

Second, technical transparency also significantly predicted continued usage intentions (H2), though the effect was more modest than its influence on trust. This finding suggests that transparency helps students understand and regulate their learning processes, allowing them to perceive value and meaning in their interaction with intelligent tutoring systems. However, the relatively smaller coefficient also indicates that transparency alone is insufficient to ensure long-term engagement, pointing toward the existence of deeper affective mechanisms at play.

Third, perceived trustworthiness had the strongest positive influence on continued usage intentions (H3), with a coefficient far exceeding that of transparency. This result highlights the central role of trust in shaping whether students persist in using intelligent tutoring systems. Trust serves not only as an evaluative judgment of system reliability but also as an emotional foundation that empowers learners to rely on AI feedback, engage in reflective learning, explore complex tasks, and overcome cognitive challenges. The strength of this relationship underscores trust as a decisive determinant of sustained behavioral engagement in AI-mediated education.

Fourth, the hierarchical regression results reveal a full mediation effect for perceived trustworthiness (H4). When trust was added to the model, the previously significant effect of transparency on continued usage intention became non-significant, indicating that the influence of transparency operates entirely through trust. Thus, transparency enhances engagement not by directly encouraging students to use the system, but by enabling them to form stable and positive trust perceptions. This finding contributes to both technology acceptance theory and innovation literacy research by clarifying that trust is the essential psychological bridge that connects cognitive comprehension with behavioral persistence.

Taken together, these findings reflect the cognitive–affective–behavioral progression that underlies innovation literacy cultivation. Transparent systems support reflective understanding; trust fosters emotional assurance and moral confidence; sustained usage enables repetitive practice, creative risk-taking, and self-directed learning, all key components of innovation-oriented education. The study thereby contributes to a deeper understanding of how trustworthy AI systems can serve as catalysts for high-quality talent development in higher education.

5.2. Managerial Implications

The results of this study generate several practical recommendations for educational institutions, system designers, and policymakers seeking to harness the pedagogical potential of intelligent tutoring systems.

First, system developers should prioritize transparent and explainable design principles. The empirical evidence clearly shows that transparency significantly increases perceived trustworthiness and indirectly fosters long-term engagement. Developers should therefore incorporate features that make system reasoning accessible to users—for example, “explain my score” functions, visual representations of knowledge-point associations, traceable feedback paths, and clear disclosures about how learner data are used. Such design elements not only improve usability but also fulfill ethical obligations to ensure fairness, accountability, and student autonomy.

Second, educators should actively cultivate a trust-supportive learning environment. The strong effect of perceived trustworthiness on continued usage intention indicates that trust is not merely a product of system design; it is also shaped by instructional practices. Teachers can enhance trust by guiding students in interpreting AI feedback, discussing algorithmic logic, and demonstrating how to critically evaluate automated recommendations. Integrating digital ethics and algorithm literacy into the curriculum can further help students develop balanced trust—neither naive reliance nor excessive skepticism. A trustful learning climate encourages reflective inquiry, experimentation, and resilience, ultimately supporting the development of innovation literacy.

Third, institutional leaders should develop governance frameworks that align transparency with educational accountability. Universities can promote trustworthy AI adoption by establishing clear policies related to privacy protection, algorithmic fairness, data governance, and student rights. Institutional transparency reports, disclosure protocols, and user-feedback mechanisms can strengthen public confidence in AI-enabled learning environments. Such governance structures will not only enhance system credibility but also support national educational strategies emphasizing innovation-driven development and high-quality talent cultivation.

Fourth, the findings highlight the importance of linking AI system design to innovation literacy goals. Continued usage intention, when supported by trust, reflects deeper cognitive engagement, adaptive learning behaviors, and innovation-oriented mindsets. Therefore, universities should not treat ITS implementation as merely a technological upgrade, but recognize it as an educational strategy to foster creativity, critical thinking, and ethical awareness. Transparent, trustworthy, and student-centered AI systems can play a pivotal role in transforming passive learning into active, inquiry-driven, and innovative exploration.

5.3. Research Limitations and Future Research Directions

Despite its theoretical and practical contributions, this study has several limitations that suggest avenues for future research. The most important is its cross-sectional design, which limits causal inference and the ability to connect the model to long-term innovation literacy development.

First, the study relies on cross-sectional questionnaire data collected at a single time point from a single university context. While the sample size of 303 respondents is adequate for regression and mediation analysis (via Hayes' PROCESS Macro), cross-sectional data cannot capture the temporal dynamics of variables: they cannot confirm whether technical transparency precedes trust formation, or whether sustained usage intention evolves into actual innovation-oriented learning behaviors over time. This constrains strong causal claims about the transparency–trust–intention chain, particularly when linking the model to innovation literacy, a construct that develops gradually through repeated reflective engagement and iterative learning. Unlike longitudinal data, which could track how trust and usage patterns shape innovation-related competencies (e.g., critical thinking, creative problem-solving) over semesters or academic years, cross-sectional data provide only a snapshot of relationships, limiting inferences about long-term educational impact. Additionally, the single-institution sample may introduce contextual bias, and common-method variance could inflate observed correlations. Future studies should adopt longitudinal tracking designs across multiple universities to validate causal directions, observe how transparency and trust evolve with ITS familiarity, and examine their long-term associations with innovation literacy.

Second, the operationalization of technical transparency reflects users' perceived transparency rather than objective system transparency. Perceptions can be influenced by interface design, communication style, or prior experience, which may not fully represent the underlying technical architecture. Future research could employ experimental designs to manipulate different levels of objective transparency and test their differential effects on trust and usage—distinguishing between superficial explainability (e.g., simple feedback labels) and substantive algorithmic openness (e.g., accessible decision logic).

Third, the current study focuses on behavioral intention (continued usage) rather than objective behavioral data or direct innovation literacy measures. Although intention is a strong predictor of actual behavior, it does not fully capture real usage patterns (e.g., frequency of reflective interaction with ITS) or learning performance. Moreover, the link between continued usage and innovation literacy remains empirically indirect: the study frames usage intention as a behavioral proxy for innovation engagement, but lacks data on proximal outcomes like reflective learning frequency, innovation-related self-efficacy, or creative problem-solving performance. Future research should integrate multi-source data to measure both behavioral engagement and direct innovation literacy indicators—strengthening the empirical connection between the transparency–trust model and educational outcomes.

Fourth, the model focuses on perceived trustworthiness as the sole mediator. While the PROCESS-mediated analysis confirms its full mediating role, learners' continued engagement may also be influenced by other psychological mechanisms such as perceived usefulness, learning satisfaction, or digital literacy. Future research could develop extended models incorporating additional mediators (e.g., “reflective learning self-efficacy”) or moderators (e.g., “AI familiarity,” “disciplinary differences in innovation demands”) to deepen understanding of individual differences in how learners respond to transparent ITS.

Finally, the study does not directly measure innovation literacy outcomes. Although the theoretical framework links transparency and trust to innovation literacy cultivation, the empirical model relies on indirect proxies (continued usage intention). Future research should explicitly include validated measures of innovation literacy (e.g., the Innovation Competence Scale for Higher Education Students, or assessments of ethical AI use in creative tasks) to test whether the transparency–trust–usage chain directly predicts innovation-related competencies. This would transform the model from a behavioral prediction framework into a more comprehensive educational impact model, aligning with the study's overarching goal of linking ITS design to innovation-driven talent cultivation.

Funding: This research was supported by the following grants: (1) Research on the Evaluation and Improvement Mechanism for College Students' Innovation and Entrepreneurship Competencies in the Context of Educational Digital Intelligence, Research and Development Fund of the Private Quality Education Research Center, Grant No. X2024ZS001; (2) Research on the Dynamic Evaluation and Enhancement Mechanism of College Students' Innovation Literacy Empowered by Digital-Intelligent Education, Key Project of the Hubei Education Science Planning Program (2025), Grant No. 2025GA114.

Ethical Approval: Not applicable.

Informed Consent Statement: Not applicable.

Data Availability Statement: Not applicable.

Acknowledgments: None.

Conflicts of Interest: The authors declare no conflicts of interest.

References

Akhter, E. AI in the Classroom: Evaluating the Effectiveness of Intelligent Tutoring Systems for Multilingual Learners in Secondary Education. ASRC Procedia Glob. Perspect. Sci. Scholarsh. 2025, 1(01), 532–563.

Boud, D.; Bearman, M. The Assessment Challenge of Social and Collaborative Learning in Higher Education. Educ. Philos. Theory 2024, 56(5), 459–468.

Majjate, H.; Bellarhmouch, Y.; Jeghal, A.; Yahyaouy, A.; Tairi, H.; Zidani, K.A. Assessing the Impact of Ethical Aspects of Recommendation Systems on Student Trust and Engagement in E-Learning Platforms: A Multifaceted Investigation. Educ. Inf. Technol. 2025, 30(3), 3953–3977.

Jiang, J.; Li, E.Y.; Tang, L. A Meta-Analysis of Antecedents and Consequences of Trust in the Sharing Economy. Internet Res. 2024, 34(6), 2257–2297.

Shahzad, M.F.; Xu, S.; Baheer, R. Assessing the Factors Influencing the Intention to Use Information and Communication Technology Implementation and Acceptance in China’s Education Sector. Humanit. Soc. Sci. Commun. 2024, 11(1), 1–15.

Zhang, H.; Leong, W.Y. Industry 5.0 and Education 5.0: Transforming Vocational Education through Intelligent Technology. J. Innov. Technol. 2024, 2024(16), 1–9.

Wischnewski, M.; Kramer, N.; Janiesch, C.; Muller, E.; Schnitzler, T.; Newen, C. In Seal We Trust?: Investigating the Effect of Certifications on Perceived Trustworthiness of AI Systems. Hum.-Mach. Commun. 2024, 8, 141–162.

Shan, Z.; Wang, Y. Strategic Talent Development in the Knowledge Economy: A Comparative Analysis of Global Practices. J. Knowl. Econ. 2024, 15(4), 19570–19596.

Zhai, C.; Wibowo, S.; Li, L.D. The Effects of Over-Reliance on AI Dialogue Systems on Students' Cognitive Abilities: A Systematic Review. Smart Learn. Environ. 2024, 11(1), 28.

Alarcon, G.M.; Capiola, A.; Lee, M.A.; Willis, S.; Hamdan, I.A.; Jessup, S.A.; Harris, K.N. Development and Validation of the System Trustworthiness Scale. Hum. Factors 2023, 66(7), 1893–1913.

Hsieh, S.H.; Lee, C.T. The AI Humanness: How Perceived Personality Builds Trust and Continuous Usage Intention. J. Prod. Brand Manag. 2024, 33(5), 618–632.

Bach, T.A.; Khan, A.; Hallock, H.; Beltrão, G.; Sousa, S. A Systematic Literature Review of User Trust in AI-Enabled Systems: An HCI Perspective. Int. J. Hum.-Comput. Interact. 2024, 40(5), 1251–1266.

Létourneau, A.; Deslandes Martineau, M.; Charland, P.; Karran, J.A.; Boasen, J.; Léger, P.M. A Systematic Review of AI-Driven Intelligent Tutoring Systems (ITS) in K-12 Education. NPJ Sci. Learn. 2025, 10(1), 29.

Goel, S. Earnings Management is “Theses Management” in Management Educational Research: A Review of Ethics for Behavioural Psychology. Acta Psychol. 2025, 258, 105216.

Yu, J.H.; Chauhan, D.; Iqbal, R.A.; Yeoh, E. Mapping Academic Perspectives on AI in Education: Trends, Challenges, and Sentiments in Educational Research (2018–2024). Educ. Technol. Res. Dev. 2025, 73(1), 199–227.

Wang, X.; Qiu, X. The Positive Effect of Artificial Intelligence Technology Transparency on Digital Endorsers: Based on the Theory of Mind Perception. J. Retail. Consum. Serv. 2024, 78, 103777.

Cheong, B.C. Transparency and Accountability in AI Systems: Safeguarding Wellbeing in the Age of Algorithmic Decision-Making. Front. Hum. Dyn. 2024, 6, 1421273.

Singhal, A.; Neveditsin, N.; Tanveer, H.; Mago, V. Toward Fairness, Accountability, Transparency, and Ethics in AI for Social Media and Health Care: Scoping Review. JMIR Med. Inform. 2024, 12(1), e50048.

Barman, K.G.; Wood, N.; Pawlowski, P. Beyond Transparency and Explainability: On the Need for Adequate and Contextualized User Guidelines for LLM Use. Ethics Inf. Technol. 2024, 26(3), 47.

Lu, Y.; Wang, D.; Chen, P.; Zhang, Z. Design and Evaluation of Trustworthy Knowledge Tracing Model for Intelligent Tutoring System. IEEE Trans. Learn. Technol. 2024, 17, 1661–1676.

Salgado, S.; Filieri, R.; Chameroy, F. Beyond Unidimensional Trust and User Roles: A Multidimensional Role-Based Approach to Trust. J. Travel Res. 2024, 00472875241305628.

Pauer, S.; Rutjens, B.T.; van Harreveld, F. Trust is Good, Control is Better: The Role of Trust and Personal Control in Response to Threat. J. Appl. Soc. Psychol. 2024, 54(9), 552–571.

Wan, Y.; Gao, Y.; Hu, Y. Blockchain Application and Collaborative Innovation in the Manufacturing Industry: Based on the Perspective of Social Trust. Technol. Forecast. Soc. Change 2022, 177, 121540.

Andriyani, Y.; Yohanitas, W.A.; Kartika, R.S. Adaptive Innovation Model Design: Integrating Agile and Open Innovation in Regional Areas Innovation. J. Open Innov. Technol. Mark. Complex. 2024, 10(1), 100197.

Yu, C.; Yan, J.; Cai, N. ChatGPT in Higher Education: Factors Influencing ChatGPT User Satisfaction and Continued Use Intention. Front. Educ. 2024, 9, 1354929.

Du, W.; Liang, R. Teachers’ Continued VR Technology Usage Intention: An Application of the UTAUT2 Model. SAGE Open 2024, 14(1), 21582440231220112.

Oiku, P.O. Innovation and Organisational Resilience among Small and Medium-Sized Enterprises in Lagos State. Int. J. Bus. Technopreneurship 2024, 14(1), 35–48.

Nepal, R.; Zhao, X.; Dong, K.; Wang, J.; Sharif, A. Can Artificial Intelligence Technology Innovation Boost Energy Resilience? The Role of Green Finance. Energy Econ. 2025, 142, 108159.

García-Aracil, A.; Isusi-Fagoaga, R.; Planells-Aleixandre, E. Students’ Perspectives of Alignment between Teaching–Learning Methods and the Promotion of Social Innovation Competencies. High. Educ. Res. Dev. 2024, 43(7), 1479–1494.

Hayes, A.F. Introduction to Mediation, Moderation, and Conditional Process Analysis: A Regression-Based Approach, 2nd ed.; The Guilford Press: New York, NY, USA, 2018.